Vector projection on a plane

This is post #4 of a series discussing the expression $(X'X)^{-1}X'Y$ used in multiple linear regression. (Here are links to earlier posts: #1, #2, #3).

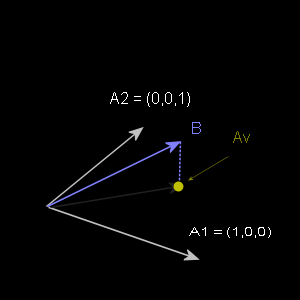

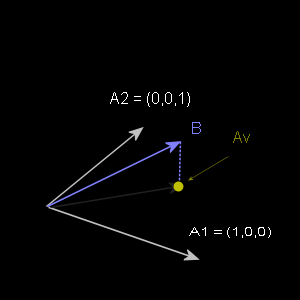

Last time we projected a 2D vector onto a 1D subspace (a line). This time we'll project a 3D vector onto a 2D subspace (a plane). In the image below, all vectors are 3D and B will be projected down onto the plane shared by A1 and A2. Again, Av is the point of projection, the result of the orthogonal projection of B on the plane.

Previously, we solved for a case in which (B - Av) and a single vector A were orthogonal (their dot product was zero). This time we need to solve for the case in which (B - Av) is orthogonal to two vectors, both A1 and A2. This will give us the point, Av, of the orthogonal projection of B onto the plane defined by A1 and A2. Given our need for a simultaneous solution to both linear equations, this is a job for linear algebra.

Multiplication of matrices amounts to calculating dot products of matrix rows and matrix columns--the rows come from the first matrix and the columns come from the second matrix.

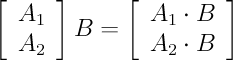

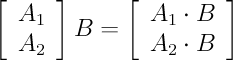

For example, if we put A1 in the first row of a matrix and A2 in the second row, we can use matrix multiplication to calculate the dot products A1 . B and A2 . B as follows:

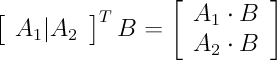

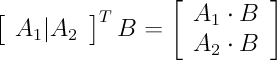

In order to get results in the form we expect to see, however, we'll put A1 and A2 into columns of the first matrix and multiply the transpose of this first matrix by B. This won't affect the calculations, just how things are displayed.

The following is mathematically equivalent to the previous equation (a matrix with A1 and A2 as row vectors is the same as the transpose of a matrix that has A1 and A2 as column vectors):

Note: I've added a vertical bar between A1 and A2 to indicate they are column vectors of the matrix.

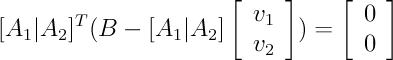

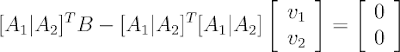

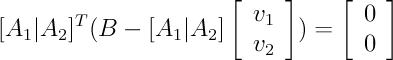

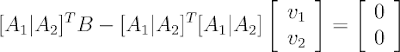
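As a quick check of the claim that the two layouts give the same dot products, here is a minimal sketch in plain Python. The vectors are made-up values chosen for illustration, and the helper names (`dot`, `transpose`, `matvec`) are hypothetical, not from any library.

```python
def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))

def transpose(m):
    """Swap the rows and columns of a matrix stored as a list of rows."""
    return [list(col) for col in zip(*m)]

def matvec(m, v):
    """Matrix-vector product: one dot product per row of m."""
    return [dot(row, v) for row in m]

# Illustrative vectors (assumed values, not taken from the post's images).
A1 = [1, 0, 0]
A2 = [1, 1, 0]
B = [2, 3, 4]

rows = [A1, A2]          # 2 x 3 matrix with A1 and A2 as rows
cols = transpose(rows)   # 3 x 2 matrix with A1 and A2 as columns

by_rows = matvec(rows, B)                   # [A1 . B, A2 . B]
by_transpose = matvec(transpose(cols), B)   # transpose of the column layout, times B

print(by_rows, by_transpose)  # [2, 5] [2, 5]
```

Both products are the same pair of dot products; only the way the matrix is written down differs.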

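In order to find the projection of vector B onto the plane, we need to solve for v1 and v2 such that A1 . (B - Av) = 0 and A2 . (B - Av) = 0, where Av = A1 * v1 + A2 * v2. The two equations are coupled: unless A1 and A2 happen to be orthogonal to each other, v1 and v2 cannot be found separately. We can express both linear equations together in a single matrix equation, [A1 | A2]' (B - Av) = 0, where [A1 | A2]' is the transpose of the matrix whose columns are A1 and A2.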

It may be helpful to refer back to the previous post, because this example is designed to mirror the previous simpler example as much as possible. In the previous post, we solved a dot product equation. We're doing the same thing here, except we have two dot product equations this time, and we're using linear algebra to solve them both simultaneously.

Just as we used the distributive property of algebra before, here we use the distributive property of matrix multiplication to get A . B - A . Av:

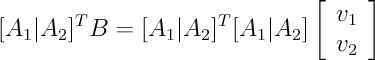

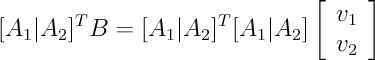

Add A . Av to both sides:

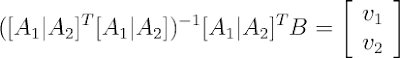

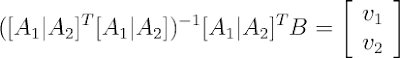

Again, we multiply both sides by the inverse of A . A (but this time we use a matrix inverse):
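With the transposes made explicit, the steps so far can be written out as below. This is a reconstruction from the surrounding text rather than a copy of the original figures: $[A_1 \mid A_2]$ stands for the matrix with columns A1 and A2, $v$ for the column vector containing v1 and v2, and the inverse in the last line is assumed to exist, which holds as long as A1 and A2 are not parallel.

$$
\begin{aligned}
[A_1 \mid A_2]'\,\bigl(B - [A_1 \mid A_2]\,v\bigr) &= 0 \\
[A_1 \mid A_2]'\,B - [A_1 \mid A_2]'\,[A_1 \mid A_2]\,v &= 0 \\
[A_1 \mid A_2]'\,B &= [A_1 \mid A_2]'\,[A_1 \mid A_2]\,v \\
\bigl([A_1 \mid A_2]'\,[A_1 \mid A_2]\bigr)^{-1}\,[A_1 \mid A_2]'\,B &= v
\end{aligned}
$$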

At this point, it will probably be a little clearer if we simply use 'A' to refer to the matrix with column vectors A1 and A2 (and V for the matrix containing v1 and v2):

$$V = (A'A)^{-1}A'B$$

The point of this series of posts is to explain the story behind the $(X'X)^{-1}X'Y$ found in multiple linear regression. The expression above is the same, except that X and Y have been replaced with A and B to avoid confusion with Cartesian coordinates. Half the story is that multiple linear regression finds its solution through a vector projection. We'll explore this in greater depth in the next post.

Finally, as was the case in the previous post, we need to multiply A by V to get the projection of vector B onto the plane defined by A1 and A2.
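To make the whole calculation concrete, here is a minimal sketch in plain Python, assuming made-up example vectors. The function name `project_onto_plane` is hypothetical. Because A has only two columns, A'A is a 2 x 2 matrix of dot products, so the sketch uses the closed-form 2 x 2 inverse instead of a general matrix routine.

```python
def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))

def project_onto_plane(A1, A2, B):
    """Return (v1, v2, Av) for the orthogonal projection of B onto the plane of A1 and A2."""
    # Every entry of A'A (2 x 2) and A'B (2 x 1) is a dot product.
    a11, a12, a22 = dot(A1, A1), dot(A1, A2), dot(A2, A2)
    b1, b2 = dot(A1, B), dot(A2, B)
    det = a11 * a22 - a12 * a12
    if det == 0:
        raise ValueError("A1 and A2 are linearly dependent, so A'A has no inverse")
    # V = (A'A)^-1 A'B, using the closed-form inverse of a 2 x 2 matrix.
    v1 = (a22 * b1 - a12 * b2) / det
    v2 = (a11 * b2 - a12 * b1) / det
    # Multiply A by V to get the point of projection.
    Av = [v1 * x + v2 * y for x, y in zip(A1, A2)]
    return v1, v2, Av

# Illustrative vectors (assumed values): A1 and A2 span the xy-plane.
A1, A2, B = [1, 0, 0], [1, 1, 0], [2, 3, 4]
v1, v2, Av = project_onto_plane(A1, A2, B)
residual = [b - p for b, p in zip(B, Av)]

print(v1, v2)    # -1.0 3.0
print(Av)        # [2.0, 3.0, 0.0]
print(dot(A1, residual), dot(A2, residual))  # 0.0 0.0
```

The last line confirms that (B - Av) is orthogonal to both A1 and A2, which is the condition the derivation started from.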

As before, there are bonus prizes. Now you know how to find the distance from a point to a plane and exactly where the nearest point on the plane lies. Furthermore, because we've moved the projection problem into the world of linear algebra, the same equations work in higher dimensions (4D, 5D and beyond).
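Both bonus prizes can be sketched in a few lines of plain Python. The function name `nearest_point_and_distance` and the example vectors are assumptions made for illustration, and the plane is taken to be the one through the origin spanned by A1 and A2. The distance is simply the length of (B - Av), and nothing in the code depends on the vectors being 3D.

```python
import math

def dot(u, v):
    """Dot product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))

def nearest_point_and_distance(A1, A2, B):
    """Nearest point to B on the plane spanned by A1 and A2, and the distance to it."""
    a11, a12, a22 = dot(A1, A1), dot(A1, A2), dot(A2, A2)
    b1, b2 = dot(A1, B), dot(A2, B)
    det = a11 * a22 - a12 * a12   # assumed nonzero (A1 and A2 not parallel)
    v1 = (a22 * b1 - a12 * b2) / det
    v2 = (a11 * b2 - a12 * b1) / det
    nearest = [v1 * x + v2 * y for x, y in zip(A1, A2)]
    residual = [b - p for b, p in zip(B, nearest)]
    return nearest, math.sqrt(dot(residual, residual))

# 3D example (assumed values).
point_3d, dist_3d = nearest_point_and_distance([1, 0, 0], [1, 1, 0], [2, 3, 4])
# The same function applied to 4D vectors.
point_4d, dist_4d = nearest_point_and_distance([1, 0, 0, 0], [1, 1, 0, 0], [1, 2, 3, 4])

print(point_3d, dist_3d)  # [2.0, 3.0, 0.0] 4.0
print(point_4d, dist_4d)  # [1.0, 2.0, 0.0, 0.0] 5.0
```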

Next time, we'll investigate the relationship between vector projection and multiple linear regression in greater detail.

6 Comments:

Very nice write up. But you dropped a transpose in the next to last equation. It is just a typo as the transpose is in the previous equation.

Feel free to delete this when you fix it.

Cheers,

Rod

Thanks, Rod! The last two equations have been updated to include the missing transpose.

Hi.

I am confused, and need, quite desperately, to know the answer.

A_1 and A_2 are 1 x 3 matrices.

A is a (2 x 3) matrix

AA' (A x Atranspose) is not square.

Non-square matrices have no inverse.

How does this equation work - can you provide an example?

Many thanks,

Andrew

Hi, Andrew.

A matrix multiplied by its transpose always produces a square result.

First let's consider the dimensionality of a matrix product. Consider the following matrix product:

C = A * B

The result C necessarily has the same number of rows as A and the same number of columns as B.

Also, a precondition for matrix multiplication is that the number of columns in A is equal to the number of rows in B. As long as this requirement is met, matrices can be multiplied.

When we multiply X by its transpose, this condition must be met because the rows of X and the columns of the X transpose are one and the same.

Considering the previous example (C = A*B), we know that the product of X and X transpose must contain the same number of rows as X and the same number of columns as X transpose, and since the columns of X transpose are the rows of X, the product of X and X transpose must be a square matrix of dimensions m by m where m is the number of rows in X.
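As a small example of the shapes involved, here is a sketch in plain Python with made-up numbers (the helper names `transpose`, `matmul` and `shape` are hypothetical). It assumes A is stored as in the post, with A1 and A2 as its columns, so A is 3 x 2.

```python
def transpose(m):
    """Swap the rows and columns of a matrix stored as a list of rows."""
    return [list(col) for col in zip(*m)]

def matmul(p, q):
    """Each entry is the dot product of a row of p with a column of q."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*q)] for row in p]

def shape(m):
    """(rows, columns) of a matrix stored as a list of rows."""
    return (len(m), len(m[0]))

# 3 x 2 matrix whose columns are A1 = (1, 0, 0) and A2 = (1, 1, 0).
A = [[1, 1],
     [0, 1],
     [0, 0]]

AtA = matmul(transpose(A), A)   # (2 x 3)(3 x 2) gives 2 x 2
AAt = matmul(A, transpose(A))   # (3 x 2)(2 x 3) gives 3 x 3

print(shape(AtA), AtA)  # (2, 2) [[1, 1], [1, 2]]
print(shape(AAt), AAt)  # (3, 3) [[2, 1, 0], [1, 1, 0], [0, 0, 0]]
```

Either order gives a square result. The product that gets inverted in the post is A'A, which here is the 2 x 2 matrix of dot products between A1 and A2.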

you are a lifesaver. thanks for this!!

It was really useful for me.

Thanx a lot
